The verdict is in & the fight is on.

Social media lost BIG in court this week, but the same playbook is already running on AI.

Happy Big Tech karma’s a b*tch day to all who celebrate. In a rare moment of good news, big tech companies were found liable in two trials in two different states this week.

In California, jurors decided that Meta and YouTube were negligent in the design and operation of their platforms and must pay $6 million in damages. In New Mexico, a jury found that Meta “knowingly harmed children’s mental health and concealed what it knew about child sexual exploitation on its social media platforms,” and the company was ordered to pay $375 million in damages. The jury agreed with prosecutors that Meta “engaged in ‘unconscionable’ trade practices that unfairly took advantage of the vulnerabilities of and inexperience of children.”

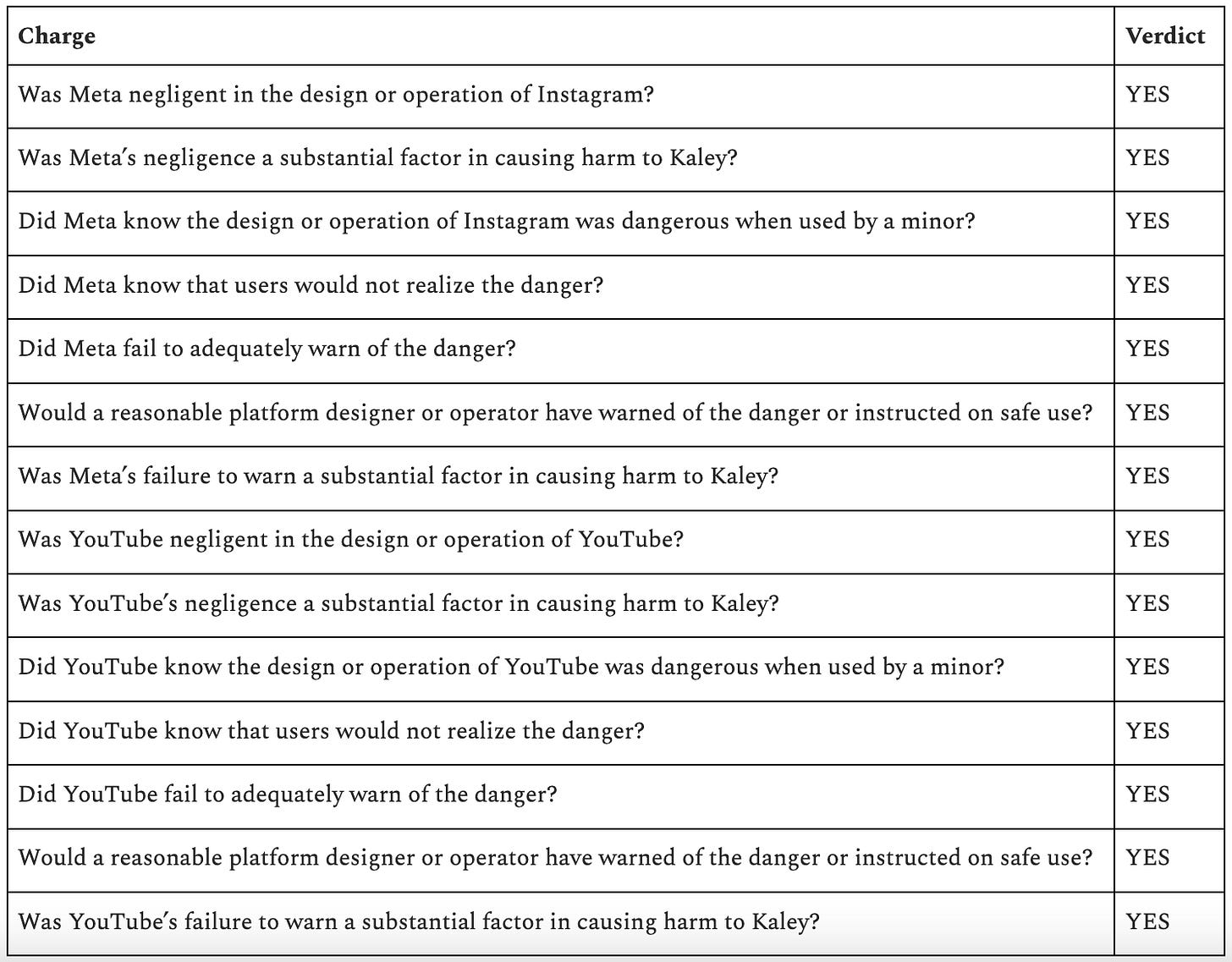

I especially enjoyed reading the specific charges in the LA case, which I have now read like 50 times. It’s hard to believe, but wow.

As Nicki Petrossi said: “They knew it was dangerous and they did it anyway. We knew that… and now everybody knows that.” AMEN, Nicki.

The lead lawyer for the plaintiff called it “the engineering of addiction” and focused on 4 features of the platforms:

Infinite scroll: Exactly what it sounds like… the feed with no stopping point. It can keep going on forever.

Algorithmic recommendations: Content that the platform suggests for you, based entirely on the aim of keeping you watching / scrolling.

Autoplay: Content that keeps appearing without a prompt. Your video ends, and the next one that the platform selected for you starts automatically, without you touching anything.

Constant notifications: Pings of various kinds that are unprompted by you. The platform is essentially calling for your attention… “Ping ping! Come back! Ping ping! Look at this!”

These are right on, and I am cautiously optimistic. Yet while I am thrilled about the outcome here, I am seeing a reaction that makes me a bit nervous that we may win the battle but lose the war.

I’m seeing post after post proclaiming “it’s their fault!” and while my first instinct is F*CK YEA, it’s really not entirely true. Companies did what companies do. These companies behaved in a completely predictable way, and that is why we have public institutions: to push back and provide some sort of constraint. And yet… public officials have allowed and even enabled their behavior. The agencies that have managed to make progress at the federal level (like the FTC and CFPB) have been gutted.

As I said last year, the uncomfortable reality (and much harder problem to solve) is that in many ways, we allowed this to happen, and even enabled it. And when I say “we” I don’t mean you or me… I mean the collective WE: the people who design and create the products, investors who finance and incentivize them, the media that covers and hypes them up, parents who use them as babysitters because in a pinch an iPad is really helpful and how the hell else are we supposed to get everything done, and government officials who have given a hall pass to an entire industry to develop addictive garbage technology that atrophies our brains, divides our communities, and warps our relationships with ourselves and each other.

These two are the first of what will be an avalanche of cases, so there’s lots to follow in the weeks and months ahead, and I hope we continue to celebrate. At the same time, let’s remember that the behavior of these companies and the design of the products are not unique to social media. They are pervasive across the tech industry. Everything we are worried about with social media is happening RIGHT NOW with AI, at a seismically larger and faster scale. And yet… AI is being integrated into products that we already use (often with no opt-out), and forced into hospitals, schools, and anywhere else that there’s a large user base with a trove of data.

There’s a lot we don’t know about AI, but there’s also a lot we DO know. We are currently picking up the pieces from what big tech has gotten away with over the past 20 years. Let’s not make the same mistake again.

More on this topic…

Detailed coverage of the trial by the Heat Initiative + Scrolling2Death, along with an emotional reading of the final verdict.

A reference guide for how existing laws apply to AI chatbots.